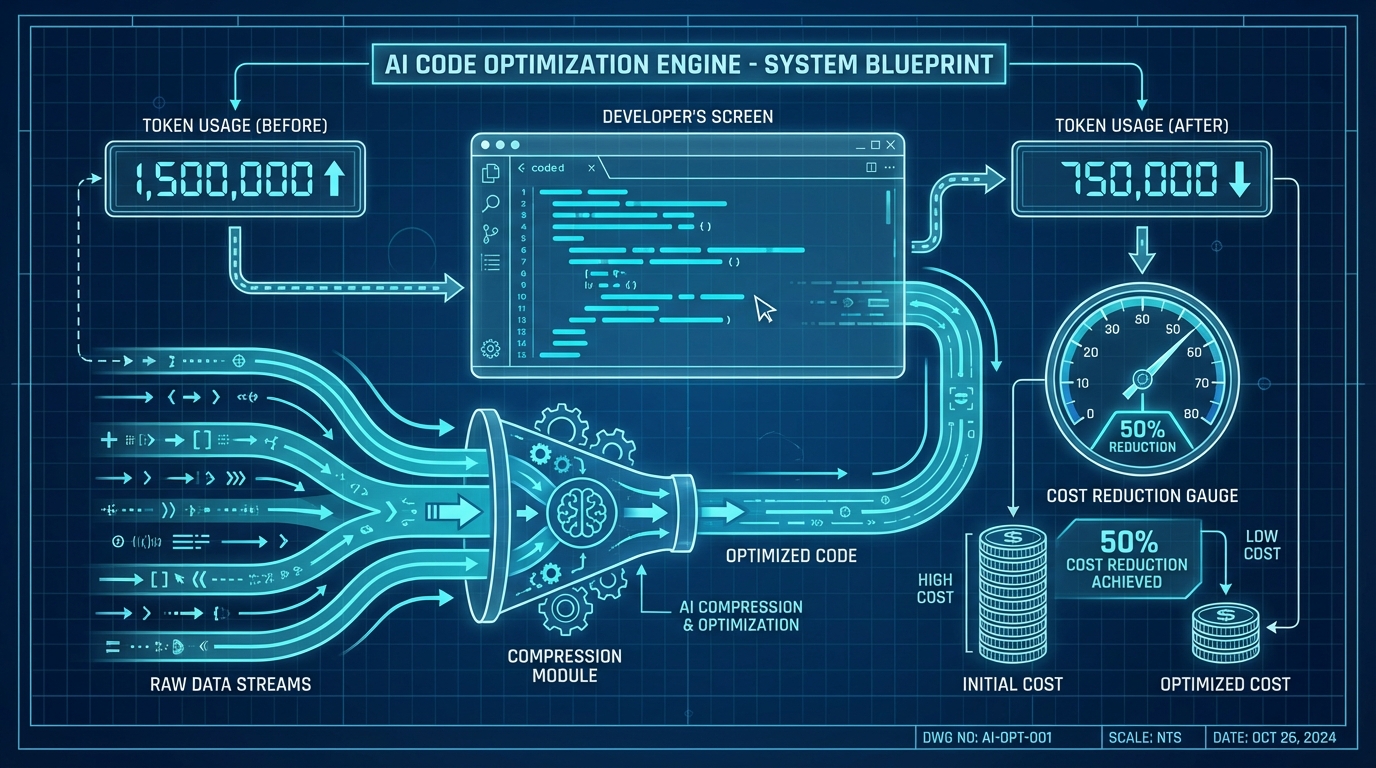

How to Reduce AI Coding Tool Token Usage by 50%

April 3, 2026

Most AI coding tools waste 60-80% of tokens on context retrieval, not code generation. You can reduce AI coding tool token usage by half with three changes: trimming system prompts, using targeted file reads, and routing tasks to cheaper models. The math is not complicated once you see where the money actually goes.

Where Your Tokens Actually Go

Your $200/month AI coding bill is mostly the AI reading files, not writing code. Context loading, system prompts, and tool call overhead dominate token counts in almost every session I've profiled. Generation, the actual output you want, is typically 20-40% of the total.

A single complex Claude Code session with Opus can hit 500,000+ tokens. At API rates that's $15 or more for one session. The breakdown almost always looks the same: system prompt (~15%), file reads (~40%), tool call scaffolding (~20%), and actual generation (~25%).

[Figure: Where Tokens Go in a Typical AI Coding Session]

The implication is blunt: optimizing generation quality is the wrong target. You optimize context.
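To make those proportions concrete, here is a minimal sketch that applies the approximate shares quoted above to a 500,000-token session. The shares are this article's rough estimates, not measurements of any particular tool, and the names `SHARES` and `breakdown` are illustrative only.

```python
# Approximate share of session tokens per category (rough estimates from the text).
SHARES = {
    "system_prompt": 0.15,
    "file_reads": 0.40,
    "tool_scaffolding": 0.20,
    "generation": 0.25,
}


def breakdown(total_tokens: int) -> dict:
    """Split a session's total token count across the categories in SHARES."""
    return {name: round(total_tokens * share) for name, share in SHARES.items()}


session = breakdown(500_000)
# Everything except generation counts as context overhead.
context_tokens = sum(v for k, v in session.items() if k != "generation")
print(session)
print(f"context overhead: {context_tokens:,} tokens ({context_tokens / 500_000:.0%})")
```

Under these assumptions, three quarters of the session is spent before the model generates anything, which is why the later sections target context rather than output.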

The Real Pricing Math Across Providers

Subscriptions and API billing are not the same product. Claude Code Max gives you 10 billion tokens for $100/month, the equivalent of roughly $15,000 at direct API rates for Opus. That's not a rounding error; it's a different category.

Any AI coding tool pricing comparison starts with this table:

[Table: AI Coding Tool Pricing Tiers (2026)]

The breakeven calculation matters more than the sticker price. If you're running more than 3-4 substantial agentic sessions per day, a subscription tier almost always wins over API billing.
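The breakeven idea can be sketched in a few lines. This is a rough model, not a billing calculator: it assumes a flat per-session API cost (the ~$15 heavy-session figure from earlier) and 21 working days per month, and the function names are made up for illustration.

```python
import math


def breakeven_sessions(subscription_usd: float, api_cost_per_session_usd: float) -> int:
    """Smallest number of sessions per month at which a flat subscription
    costs no more than paying per session at API rates."""
    if api_cost_per_session_usd <= 0:
        raise ValueError("per-session API cost must be positive")
    return math.ceil(subscription_usd / api_cost_per_session_usd)


def monthly_api_cost(sessions_per_day: float, api_cost_per_session_usd: float,
                     working_days: int = 21) -> float:
    """Estimated monthly API bill (working_days is an assumed default)."""
    return sessions_per_day * working_days * api_cost_per_session_usd


# Figures taken from this article: $100/month subscription, ~$15 per heavy session.
print(breakeven_sessions(100, 15))  # sessions per month needed to break even
print(monthly_api_cost(3, 15))      # estimated API bill at 3 heavy sessions per day
```

Plug in your own per-session cost; lighter sessions push the breakeven point up, which is why the sticker price alone tells you little.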


The tool you pick for inline completion does not need to be the same tool you use for agents.

Trim Your System Prompt

CLAUDE.md token management is the single highest-impact change you can make. Every token in that file re-fires on every single tool call, not just every session. An 800-token CLAUDE.md running across 200 tool calls in one session costs you 160,000 tokens before the model writes a line of code.

Trimming context rules from verbose to directive cuts costs by around 21%. Here's what that looks like in practice:

Before (812 tokens):

    # Project Guidelines

    When working on this codebase, please make sure to always follow our established patterns
    for component architecture. We use a monorepo structure with apps in /apps/<name>/ and
    shared configuration at the root level. All React components should be functional components
    using hooks. We prefer TypeScript strict mode and all new files must include proper type
    annotations. When writing tests, use Vitest for unit tests and Playwright for end-to-end
    tests. For styling, we use Tailwind CSS — please don't introduce new CSS files. Database
    queries should go through our ORM layer, never raw SQL. Please also make sure to check
    the existing components before creating new ones to avoid duplication. Commit messages
    should follow conventional commits format. All API routes need input validation using Zod.

After (178 tokens):

    # Rules

    - Monorepo: apps in /apps/<name>/, shared config at root
    - React: functional components, TypeScript strict
    - Tests: Vitest (unit), Playwright (e2e)
    - Style: Tailwind only
    - DB: ORM only, no raw SQL
    - Commits: conventional commits
    - APIs: Zod validation required
    - Check existing components before creating new ones

Same information. 78% fewer tokens. The model doesn't need prose framing; it needs the rule.
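The arithmetic behind those numbers is easy to check. The sketch below assumes, as the text above does, that the instruction file is re-sent once per tool call; the 200-call session is the same hypothetical used earlier, and the helper names are illustrative.

```python
def prompt_overhead(prompt_tokens: int, tool_calls: int) -> int:
    """Tokens spent re-sending the instruction file across a session,
    assuming it is included once per tool call."""
    return prompt_tokens * tool_calls


def reduction(before: int, after: int) -> float:
    """Fractional size reduction going from `before` to `after`."""
    return (before - after) / before


before, after, calls = 812, 178, 200
saved = prompt_overhead(before, calls) - prompt_overhead(after, calls)
print(f"{reduction(before, after):.0%} smaller file")
print(f"{saved:,} tokens saved over {calls} tool calls")
```

The per-file saving looks small in isolation; it is the multiplication by tool calls that makes it matter.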

For more on structuring project instructions, see 15 Claude Code Tips That Actually Make a Difference.

Read Less, Generate More

Targeted file reads are 10-50x cheaper per read than loading full files. Asking for lines 40-60 of a 400-line file instead of the whole thing is the difference between about 20 lines of context and 400 lines of mostly noise.

The patterns that actually reduce token burn in practice:

- Use /compact after completing a large subtask. It summarizes conversation history and can recover significant context space.

- Use /clear when switching to a completely unrelated task rather than continuing in the same session.

- Avoid asking Claude Code to "look at" a file when you can paste the 5 relevant lines yourself.

- Specify line ranges explicitly: "read lines 45-80 of src/auth/middleware.ts" instead of "read the auth middleware."

- Disable MCP tools you aren't actively using. Too many installed tools shrink the effective context window from 200K to around 70K tokens.
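If you prefer to paste the relevant lines yourself rather than have the agent open the file, a small helper is enough. This is a minimal sketch using only the Python standard library; `read_line_range` is a hypothetical name, not part of any tool mentioned here.

```python
from itertools import islice


def read_line_range(path: str, start: int, end: int) -> str:
    """Return lines start..end (1-indexed, inclusive) of a text file."""
    if start < 1 or end < start:
        raise ValueError("expected 1 <= start <= end")
    with open(path, encoding="utf-8") as f:
        # islice stops consuming the file once the last requested line is read,
        # so the rest of a large file is never loaded.
        return "".join(islice(f, start - 1, end))
```

For the example in the list above, `read_line_range("src/auth/middleware.ts", 45, 80)` would return just those 36 lines, ready to paste into the prompt.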

A skills architecture is the right long-term pattern. Progressive disclosure of project context, rather than dumping everything upfront, recovered roughly 15,000 tokens per session in documented benchmarks, an 82% improvement in usable context.

Session discipline matters as much as prompt discipline. A session that started with a React component refactor should not still be running when you switch to debugging a database migration.

Route Tasks to the Right Model

60-70% of agent actions don't need Opus. Switching to a cheaper model for those tasks cuts costs 5-8x without meaningfully affecting output quality.

The tasks that work fine on Haiku or Sonnet:

- Linting and formatting fixes

- Boilerplate generation with a clear template

- Simple test generation for pure functions

- Documentation updates to existing content

- File renaming and import path updates

- Generating console.log debug statements

The tasks that genuinely need Opus:

- Architectural decisions across multiple files

- Debugging multi-layer race conditions or async errors

- Complex refactors that touch more than 5 files

- Writing new algorithms without an existing reference

[Figure: Relative Cost per 1M Tokens by Model (Claude API)]

I default to Sonnet for almost everything and switch to Opus only when a task fails. That single habit cut my API spend by more than half before I changed anything else.
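The "start cheap, escalate on failure" habit can be sketched as a small router. Everything here is an assumption for illustration: the task category names, the tier labels (plain strings, not real model identifiers), and the `run` callback, which stands in for whatever actually executes the task and reports success.

```python
from typing import Callable

# Hypothetical task categories, loosely following the two lists above.
CHEAP_TASKS = {"lint", "boilerplate", "simple_tests", "docs", "rename", "debug_logs"}
HARD_TASKS = {"architecture", "race_condition", "large_refactor", "new_algorithm"}

# Tier labels only; map them to real model identifiers in your own tooling.
ESCALATION = {"haiku": "sonnet", "sonnet": "opus"}


def pick_tier(task_type: str) -> str:
    """Choose a starting tier: cheap tasks start low, hard tasks start at the
    top tier, and everything else defaults to the middle tier."""
    if task_type in CHEAP_TASKS:
        return "haiku"
    if task_type in HARD_TASKS:
        return "opus"
    return "sonnet"


def run_with_escalation(task_type: str, run: Callable[[str], bool]) -> str:
    """Run the task on its starting tier and step up one tier per failure.
    `run(tier)` returns True on success. Returns the tier that succeeded."""
    tier = pick_tier(task_type)
    while True:
        if run(tier):
            return tier
        if tier not in ESCALATION:
            raise RuntimeError(f"task failed on the top tier: {task_type}")
        tier = ESCALATION[tier]
```

The design choice worth noting is that escalation is driven by failure rather than by a guess about difficulty, so the expensive tier is only paid for when a cheaper one has already fallen short.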

The Cursor vs Claude Code cost gap gets wider the more you default to the highest tier. Model routing is not a cost optimization trick. It's the correct default behavior.

The Tool-Rotation Playbook

No single AI coding tool is best at every task. The most cost-effective setup uses multiple tools for what each one actually does well.

My current rotation:

GitHub Copilot Pro

At $10/month, this handles inline completions all day. It's fast, invisible, and the 300 premium request limit doesn't matter for autocomplete.

Claude Code Max

At $100/month, this runs all agentic work: multi-file refactors, debugging sessions, spec-driven feature implementation. The Claude Code token optimization payoff is real at this tier because long sessions stay viable.

OpenAI Codex

This handles batch processing jobs I don't need interactively. Generating test fixtures, bulk renaming, filling in repetitive scaffolding across many files.

The key constraint is context hygiene per tool. Claude Code sessions that bloat because you forgot to /clear are expensive. Codex batch jobs run with explicit, minimal context by design.

Running Copilot for completions doesn't take value away from Claude Code. They solve different problems at different points in a workflow, and splitting the load avoids hitting usage limits on any single tool. For teams trying to control AI tooling costs without cutting capability, this stack costs around $110-130/month per developer versus $200+ for a single-tool, max-tier approach.

The token waste problem is mostly a context discipline problem. Pick the right tool for the task size, trim your system prompts, read targeted file ranges, and route simple work to cheaper models. Developers who apply all four habits consistently report cuts closer to 65-70%.