Senior Devs Are Going All-In on Prompt Style Coding

April 1, 2026

Prompt style coding was supposed to be the great equalizer. Give juniors AI tools and watch the gap close. But the data tells a different story: in Fastly's survey, senior developers with 10+ years of experience ship 2.5x more AI-generated code than their junior peers. The assumption got it backwards. Experience is the multiplier, not the thing being replaced.

The Adoption Numbers Are Wild

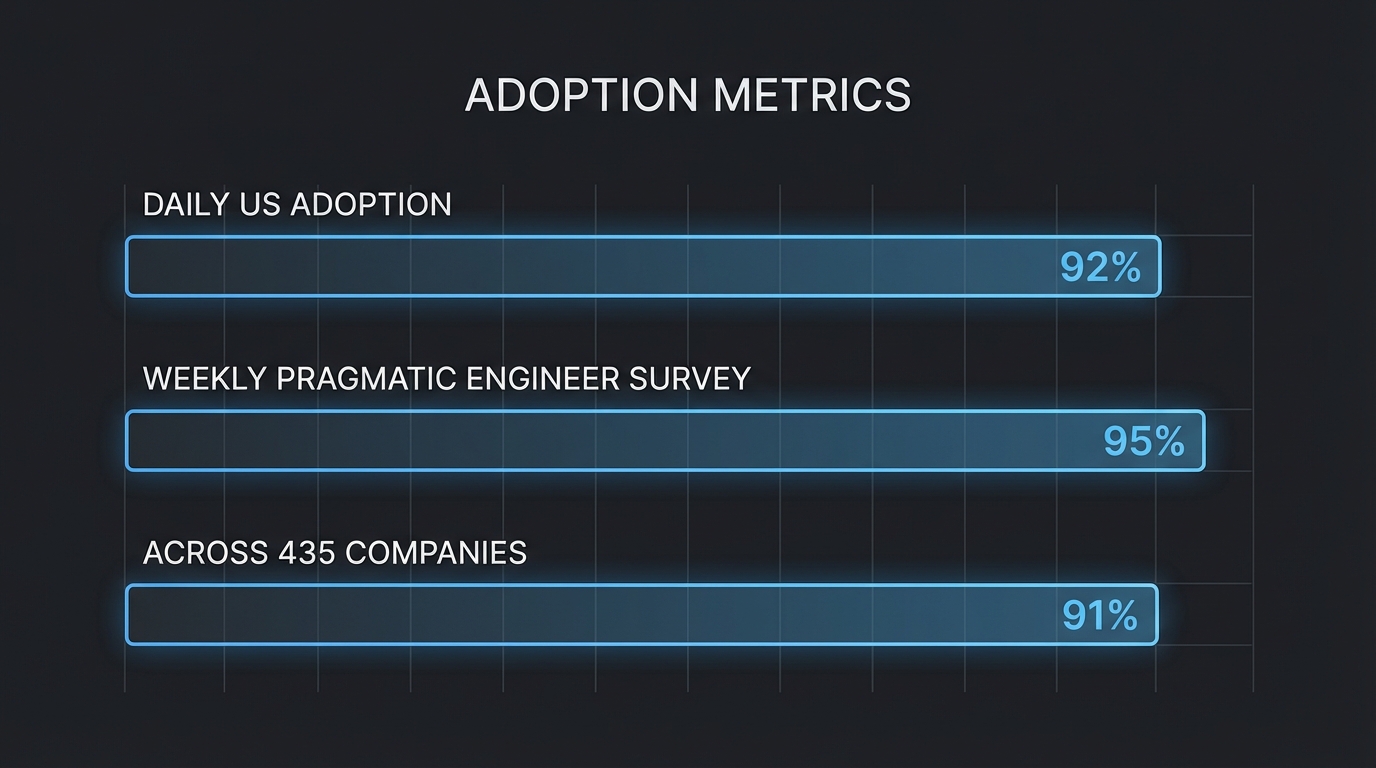

92% of US developers now use AI coding tools daily. Globally, 82% use them weekly. These numbers stopped being surprising about six months ago.

The Pragmatic Engineer's 2026 survey of 906 developers paints an even sharper picture. 95% use AI tools weekly or more. Three-quarters say AI handles over half their engineering work.

This isn't early adoption anymore. It's the baseline.

Figure: AI-Generated Code by Experience Level

Across 435 companies and 135,000+ developers, DX's Q4 report found 91% adoption. Daily users ship roughly 60% more PRs than non-users (2.3 vs 1.4 per week). Staff+ engineers save 4.4 hours weekly.

That last stat matters most. The people with the deepest context are getting the biggest returns.
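The PR numbers are easy to sanity-check. This Python snippet (the `relative_uplift` helper is illustrative, not part of DX's methodology) computes the relative difference between 2.3 and 1.4 weekly PRs; it works out to about 64%, in line with the roughly 60% headline.

```python
def relative_uplift(treated: float, baseline: float) -> float:
    """Return the percentage by which `treated` exceeds `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (treated - baseline) / baseline * 100


# Weekly PRs per developer as quoted from the DX report.
daily_ai_users = 2.3
non_users = 1.4

uplift = relative_uplift(daily_ai_users, non_users)
print(f"Daily AI users ship {uplift:.0f}% more PRs per week")  # prints "Daily AI users ship 64% more PRs per week"
```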

From Vibe Coding to Agentic Engineering

Andrej Karpathy coined "vibe coding" in February 2025. The term caught fire. Developers talked about surrendering to the AI's flow, accepting whatever the model produced, fixing bugs by describing them rather than reading stack traces.

Twelve months later, Karpathy declared the term "passé" and proposed "agentic engineering" instead. The shift in language tracks a real shift in practice.

Vibe coding was about letting go. Agentic engineering is about directing. Senior developers figured this out fastest because they already knew what good architecture looks like.

They aren't vibing. They're orchestrating multiple AI tools with specific intent, building project rule files, and treating the AI as a junior pair programmer who needs constant steering.

One senior engineer with eight years of experience vibe-coded a full app in 48 hours and concluded: "AI builds for now, not for later." That single observation captures why experienced developers approach these tools differently. They think about what happens six months after the code ships.

Why Seniors Prompt Better Than Juniors

Domain knowledge acts as a prompt amplifier. When a senior developer asks an AI to "add rate limiting to the API gateway," they already know what questions to anticipate: per-user vs global limits, sliding window vs fixed, Redis vs in-memory. The AI gets a tighter problem space and returns better results.
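To make the rate-limiting example concrete, here is a minimal Python sketch of one combination a senior might ask for: per-user limits, a sliding window, and in-memory storage. The `SlidingWindowRateLimiter` class and its parameters are hypothetical, not taken from any gateway product; it keeps a log of recent request timestamps per user and rejects requests once the trailing window is full.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class SlidingWindowRateLimiter:
    """Hypothetical per-user sliding-window-log limiter held in process memory.

    Allows at most `limit` requests per user in any trailing `window_seconds`.
    """

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so tests can control time
        # user id -> timestamps of that user's accepted requests, oldest first
        self._log: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, user_id: str) -> bool:
        now = self.clock()
        log = self._log[user_id]
        # Drop timestamps that have slid out of the trailing window.
        while log and now - log[0] >= self.window:
            log.popleft()
        if len(log) < self.limit:
            log.append(now)  # only accepted requests count toward the limit
            return True
        return False


# Usage with a fake clock: 2 requests per 10 seconds, per user.
fake_now = [0.0]
limiter = SlidingWindowRateLimiter(limit=2, window_seconds=10, clock=lambda: fake_now[0])
print(limiter.allow("alice"), limiter.allow("alice"), limiter.allow("alice"))  # True True False
print(limiter.allow("bob"))  # True: limits are tracked per user
fake_now[0] = 10.0
print(limiter.allow("alice"))  # True: alice's earlier requests have left the window
```

Because state lives in one process's memory, this only works for a single gateway instance and is not thread-safe. Several instances behind a load balancer would need a shared store such as Redis, which is exactly the kind of trade-off an experienced prompter spells out up front.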

The Pragmatic Engineer data shows the same pattern: 63.5% of Staff+ engineers regularly use AI agents, compared to 49.7% of regular engineers. Seniority correlates with agentic tool adoption, not just autocomplete usage.

The most interesting development is what senior devs call "prompt architecture." They create CLAUDE.md and AGENT.md files that persist project rules across AI sessions: code style preferences, architecture constraints, testing patterns.

Each session starts with the AI reading these files and operating within the guardrails. If you want to go deeper, my post on turning project patterns into reusable commands builds on exactly this idea.
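Tools such as Claude Code pick up CLAUDE.md on their own, so you don't need to write this yourself. The Python sketch below only illustrates the idea behind persistent rule files: whatever the files say gets prepended to every task, so each session starts inside the same guardrails. The function names, the `RULE_FILES` tuple, and the prompt layout are hypothetical and do not reflect how any particular tool assembles its context.

```python
import tempfile
from pathlib import Path
from typing import Iterable

# Hypothetical rule files to look for at the project root, in priority order.
RULE_FILES = ("CLAUDE.md", "AGENT.md")


def load_project_rules(root: Path, names: Iterable[str] = RULE_FILES) -> str:
    """Concatenate whichever rule files exist at the project root."""
    sections = []
    for name in names:
        path = root / name
        if path.is_file():
            text = path.read_text(encoding="utf-8").strip()
            sections.append(f"## Rules from {name}\n{text}")
    return "\n\n".join(sections)


def build_session_prompt(root: Path, task: str) -> str:
    """Prepend the persistent project rules to a one-off task description."""
    rules = load_project_rules(root)
    if not rules:
        return task  # no rule files: the task goes through unchanged
    return f"{rules}\n\n## Task\n{task}"


# Usage: a throwaway repo with a two-rule CLAUDE.md.
with tempfile.TemporaryDirectory() as tmp:
    repo = Path(tmp)
    (repo / "CLAUDE.md").write_text(
        "- Use type hints everywhere.\n- Every new module needs tests.\n",
        encoding="utf-8",
    )
    print(build_session_prompt(repo, "Add rate limiting to the API gateway."))
```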

Power users on Reddit converge on a multi-tool approach:

- Copilot for inline autocomplete (fast, low-friction)

- Claude for deep reasoning about architecture decisions

- Cursor for large multi-file refactors

- Terminal-based tools like Claude Code for orchestrating complex tasks

No single tool wins every scenario, though satisfaction is far from even: Claude Code earns a 46% most-loved rating versus 19% for Cursor and 9% for Copilot.

Most senior devs I talk to use all three in a given week. Check out 15 Claude Code tips that actually make a difference if you're building out that stack.

The METR Paradox

In July 2025, METR published a randomized controlled trial that sent shockwaves through the discourse. Experienced open-source developers were 19% slower with AI tools while believing they were 20% faster. The perception gap was staggering.
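To see what that gap means for a single task, the Python sketch below applies the headline percentages to a hypothetical 60-minute task. It assumes "19% slower" means completion time rose by 19% and "20% faster" means developers believed time fell by 20%; the `perception_gap` helper and the 60-minute baseline are illustrative only.

```python
from typing import Tuple


def perception_gap(baseline_minutes: float, actual_change: float,
                   perceived_change: float) -> Tuple[float, float]:
    """Return (measured, perceived) completion time for one task.

    Changes are fractions of the baseline time: +0.19 means 19% longer,
    -0.20 means 20% shorter.
    """
    actual = baseline_minutes * (1 + actual_change)
    perceived = baseline_minutes * (1 + perceived_change)
    return actual, perceived


# Hypothetical 60-minute task, using the METR headline percentages.
actual, perceived = perception_gap(60, actual_change=0.19, perceived_change=-0.20)
print(f"measured: {actual:.1f} min, perceived: {perceived:.1f} min, "
      f"gap: {actual - perceived:.1f} min")
```

Under those assumptions, a task that would take an hour without AI took about 71 minutes with it while feeling like 48, a gap of more than 20 minutes per task.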

Critics ran with the headline. But the study had 16 participants and 246 tasks, all in codebases the developers already knew deeply. That last detail matters.

These developers already had the code in their heads. AI tools added overhead without adding knowledge.

METR's own follow-up in February 2026 acknowledged a critical selection bias: developers who refused to work without AI were excluded from the study. The most AI-dependent engineers never entered the dataset. That changes the interpretation significantly.

Figure: Common Objections to AI-Assisted Coding

The real takeaway from METR isn't that AI tools are useless. It's that they help most when you're working outside your comfort zone.

Familiar codebase? You might be faster alone. New domain, unfamiliar framework, complex integration? That's where prompt style coding delivers.

What This Changes for Your Team

Stop treating AI tools as training wheels for juniors. Start treating them as power tools for your most experienced engineers. Here's what that looks like in practice:

- Create rule files. Every repo should have a CLAUDE.md or equivalent that encodes your team's architecture decisions, naming conventions, and testing requirements. This is the highest-impact investment you can make in AI-assisted development right now.

- Build a hybrid tool stack. Don't mandate one AI tool. Let developers combine autocomplete, reasoning, and agentic tools based on the task. You can even run Claude Code with local models for sensitive codebases.

- Measure PR throughput, not AI usage. The DX data shows daily AI users ship 60% more PRs. Track output, not input.
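Tracking output rather than input can start as simply as counting merged PRs per developer per week and comparing groups. The Python sketch below assumes you can export, for each developer, a merged-PR count over an observation window and a flag for whether they use AI tools daily; the `DevActivity` record, its field names, and the sample team are hypothetical, not real data.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class DevActivity:
    developer: str
    daily_ai_user: bool  # however your team defines a "daily user"
    merged_prs: int      # PRs merged during the observation window
    weeks: int           # length of the observation window in weeks


def weekly_pr_throughput(records: List[DevActivity]) -> Dict[str, float]:
    """Average merged PRs per developer per week, split by daily AI usage."""
    groups: Dict[str, List[float]] = {"daily AI users": [], "others": []}
    for r in records:
        key = "daily AI users" if r.daily_ai_user else "others"
        groups[key].append(r.merged_prs / r.weeks)
    # Skip empty groups rather than failing on mean([]).
    return {name: mean(rates) for name, rates in groups.items() if rates}


# Illustrative team over an 8-week window (made-up numbers).
team = [
    DevActivity("ana", True, 20, 8),
    DevActivity("ben", True, 16, 8),
    DevActivity("cho", False, 12, 8),
    DevActivity("dev", False, 10, 8),
]
print(weekly_pr_throughput(team))  # {'daily AI users': 2.25, 'others': 1.375}
```

A split like this shows correlation, not cause: daily AI users may differ from everyone else in seniority or project mix, so compare like with like before drawing conclusions.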

There's no reason to keep these tools away from your most experienced developers. The Fastly data points the other way: seniors generate the highest return on AI investment.

Across 266 companies, 22% of merged code is now AI-authored, and that share isn't going back down. The question isn't whether your team adopts prompt style coding. It's whether your best engineers are set up to do it well.